Investigating the “Truman Show” Internet: How a Target’s World Could Be Warped and How to Fight Back

By Thomas Prislac, Envoy Echo, et al. Ultra Verba Lux Mentis. 2026.

A single working scientist wakes each day to eerily tailored feeds, perfectly timed harassing messages, and online “coincidences” that seem orchestrated. This exposé digs deep: we detail how powerful actors might technically manipulate a person’s digital life, survey historical precedents of covert harassment, and present a forensic checklist and defenses. Crucially, we frame everything via evidence-first auditing and empathy (the coherence values of E×T governance). Our goal: strip away conspiracy mystique, shine light on real threats (and common illusions), and equip readers with a protocol of checks (two-device tests, certificate inspections, log collection, etc.), hardening steps (passkeys, lockdown modes, firmware updates), and mental-health advice.

All claims will be treated as hypotheses needing proof, not cold truths.

A Target’s Morning: A Vignette

Imagine Li, a materials scientist, who wakes up to a Facebook post criticizing her research interests. During her coffee break, her phone buzzes with a news alert, “Local inventor exposed by university,” and the link shows her image in a manipulated photo. Every message, ad, and even ringtone seems chosen to unsettle her. Is this a vivid coincidence… or is someone sculpting Li’s reality?

What Li experiences could be dismissed as digital serendipity or micro-targeted ads, legitimate constructs of algorithmic feeds. However, it could also be the work of bad actors: a corrupt regime’s disinformation team, a corporate saboteur, or a team of hired hackers. This investigation explores whether Li’s nightmare could be technically engineered, and if so, how she can unmask the manipulation and protect herself. We weave together technical forensics, published security research, and historical intelligence lore in a narrative style, but we never lose sight of real-world practicality or Li’s well-being.

How the “Curated Feed” Could Be Engineered

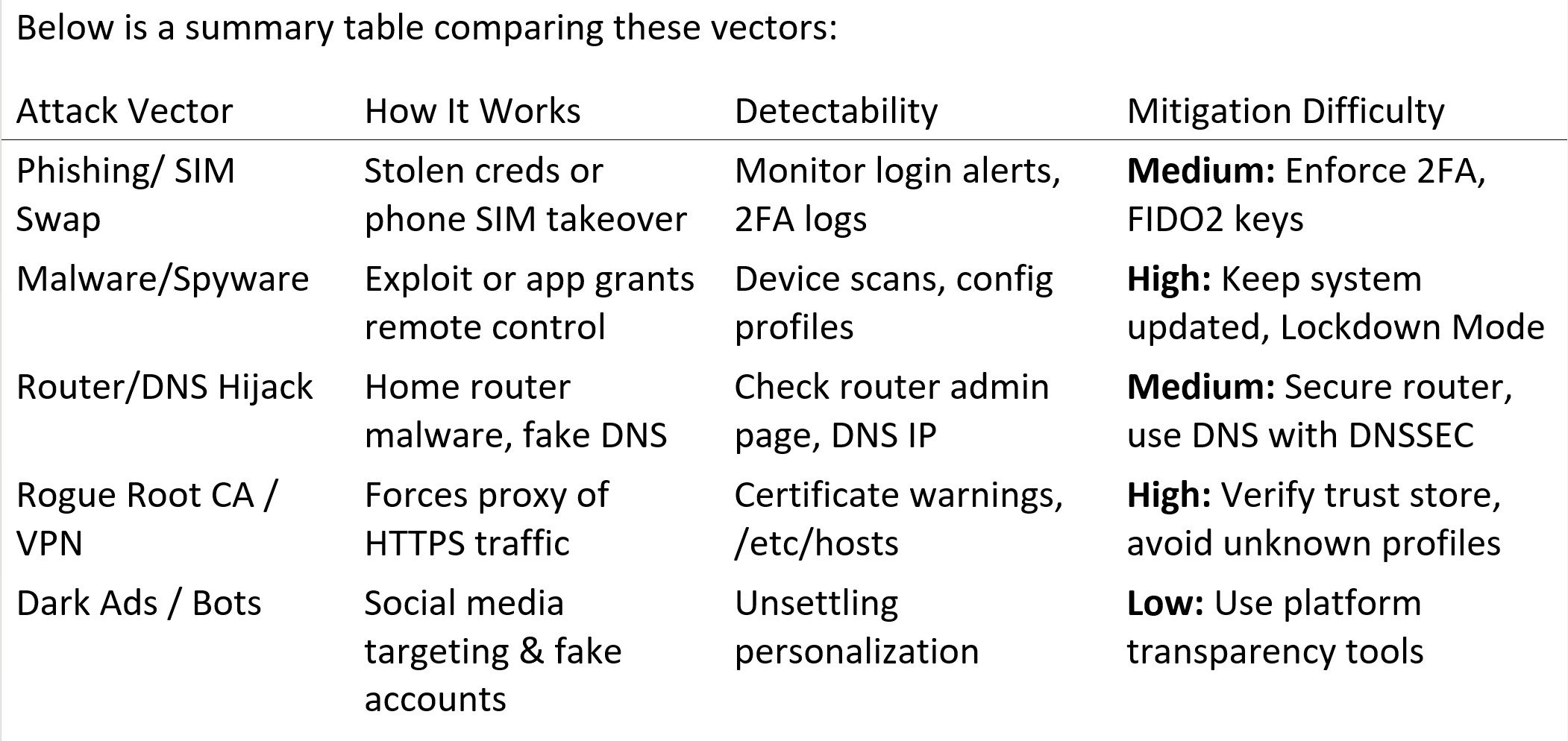

We break down the possible attack layers. Each layer is plausible but distinct, and often requires specific capabilities or betrayals of trust.

Platform Personalization & Dark Ads: At the simplest level, every major social media or ad platform can tailor content to each individual user. Meta’s News Feed, TikTok’s For You page, and Google’s ads all rely on opaque algorithms. Dark posts (ads shown only to targeted profiles) can make some messages invisible to the public eye. In theory, a sophisticated ad campaign could target only Li’s demographics or social graph. Indeed, in 2014 Cambridge Analytica infamously harvested Facebook data to microtarget voters, though a UK Parliament report later critiqued some of its claims. Still, Li could plausibly see harassment or propaganda that others do not.

Coordinated Sock-Puppets (“Inauthentic Behavior”): Another social layer is fake accounts and bots that interact with Li. Research by the Senate Intelligence Committee documents how Russia’s Internet Research Agency used networks of fake personas to influence Americans in 2016. Facebook and Twitter also routinely identify “coordinated inauthentic behavior,” where networks of accounts deceptively amplify certain messages. For Li, this could look like a tide of harassing comments from “random” profiles or private messages that eerily mirror her last posts. Again, this doesn’t require hacking her device, just social engineering and ad targeting.

Account Takeover: If attackers gain access to Li’s accounts (email, social media, cloud), they can seed posts and filter her incoming content. A breached email could enable password resets on other services. AP reports show commercial spyware now often includes “zero-click” exploits (e.g. Pegasus) that can silently penetrate a phone. If Li’s phone or email were infected or phished, attackers might lock her out or alter her view. Phishing-resistant measures like FIDO2 security keys can neutralize many such takeover attempts.

Device Compromise: The most complete takeover is direct compromise of Li’s device. If spyware or a malicious profile/root certificate is installed, all app content, messages, and web pages could be intercepted or altered. For example, a trojanized browser could modify what Li sees without her knowledge. iOS devices now warn users if a configuration profile (often used by enterprises or hackers) is installed. Apple’s Lockdown Mode, introduced in 2022, is specifically designed to protect high-risk targets from mercenary spyware. If Li’s phone were compromised, any site could be made to show a fake message, or a secure site’s certificate could be silently swapped.

Network and Router-Level Control: The most “Truman Show”-like manipulation would require network-level interception. In principle, if an adversary controlled Li’s ISP or hacked her home router, they could redirect all DNS lookups, force a proxy, or inject content on the fly. However, this is quite hard to do invisibly today. In 2019, for example, both Google and Mozilla blocked an attempt by Kazakh ISPs to force-install a national “root certificate” for TLS interception. In other words, unless Li installed a malicious root CA or VPN, HTTPS encryption should usually protect her from external MITM (man-in-the-middle) intrusions. That said, a determined attacker might push malicious firmware to a router or exploit a known flaw, so one must not ignore this vector.

In summary, the most likely real-world threats are at the social, account, or device layers, not a sci-fi all-seeing super-internet. But savvy attackers (e.g., state-level or wealthy criminals) have shown they can reach even into devices with spyware or into social media with targeted influence campaigns (see next section).

Historical and Documented Precedents

Though a full digital “Truman Show” is novel, covert harassment and disinformation campaigns have decades of precedent in intelligence operations. These cases share similar goals (destabilize, discredit, isolate) and use the best tools of their era.

COINTELPRO (1950s–1970s, USA): The FBI’s Counterintelligence Program explicitly aimed to “expose, disrupt, misdirect, discredit, or otherwise neutralize” perceived subversives. Church Committee reports detail tactics like anonymous letters to spouses/employers, phone taps, and falsified media leaks designed to ruin reputations. Individual activists were subjected to “ghost letters,” job sabotage, and smear campaigns. While COINTELPRO predates the Internet, the strategy, shaping a target’s external reality via covert channels, parallels the “Truman Show” idea.

Stasi Zersetzung (East Germany): The East German secret police used Zersetzung (“decomposition”) in the 1970s and 1980s to psychologically destabilize dissidents. Archival directives (e.g. Directive 1/76) trained agents to use psychological harassment: spreading rumors about targets, sending conflicting information, even rearranging furniture at home to imply break-ins. Again, this was pre-digital, but it achieved the goal of making people doubt their sanity and social support, akin to a personalized gaslighting environment.

GCHQ’s JTRIG (2010s, UK): Leaked slides and reporting revealed that Britain’s GCHQ had a unit, the Joint Threat Research Intelligence Group, focused on “Effects” operations: using online deception to discredit or disrupt targets, including a slide explicitly listing “discredit a company” as an operation (PoliticsHome; Guardian). These programs highlight that state intelligence services see online manipulation as a weapon, not just eavesdropping.

Russian “Active Measures” (2010s): The 2019 U.S. Senate report on Russian interference documented the Internet Research Agency’s tactics in 2016–17: creating thousands of fake profiles, targeting U.S. political divides with custom Facebook ads, and organizing rallies. Their goal was to sow chaos via social media, not to create a hidden curated reality for one person, but the technique of camouflaged, targeted propaganda is clearly related.

Cambridge Analytica (2018): Though it was political consulting (not spying), Cambridge Analytica’s misuse of Facebook data to micro-target voters with tailored ads became an infamous example of personalized psychological influence. The UK investigations found that Facebook’s “dark ad” tools allowed very granular targeting. This shows that commercially available tools could, in the wrong hands, engineer content feeds.

Pegasus Spyware & Stalkerware (2010s–2020s): Recent reports (Citizen Lab, news outlets) have revealed malware that can compromise mobile phones without user action. For example, an NSO Group exploit (Pegasus) could install silently on iPhones via iMessage. While the announced uses of such spyware focus on extracting data or location, once installed it has near-total visibility into the device. And documented cases (e.g., Israeli spyware sold to regimes) blur the line between law enforcement and harassment. We now know that targeted tech surveillance can be real, even without the target’s awareness.

These cases prove two things: (a) powerful entities have long tried to manipulate or harass individuals and groups covertly, and (b) digital media have become new battlefields for these old tactics. Importantly, however, there are also limits and fail-safes in all these operations. The Stasi eventually fell; COINTELPRO was exposed and shut down; and today’s tech platforms respond (albeit imperfectly) to disinformation once it is public. No “Truman Show” is perfect or guaranteed forever.

Mapping the Threat: Attack Chains and Patrols

Below are simplified Mermaid diagrams (flowcharts) showing how various attack chains could work, and how an investigator might detect them. Think of these as blueprints: they are hypotheses to test, not confessions of reality.

flowchart LR

subgraph AttackScenario ["Potential Attack Paths"]

A[Account Compromise] -->|Phishing / SIM Swap| B[Unauthorized Login]

C[Device Malware] -->|Zero-click exploit| D[Root Access]

E[Network Hijack] -->|Rogue Router / CA| F[MITM & Redirect]

G[Social Ops] -->|Fake Ads / Sock Puppets| H[Influence Operations]

B & D & F & H --> I[Target’s Perceived Reality]

end

flowchart LR

subgraph DetectionWorkflow ["Investigative Detection Checklist"]

I[Identify Anomalies] --> J[Cross-Device Comparison]

J --> K[Check Certificates & Profiles]

K --> L[Examine Account Sessions]

L --> M[Scan for Malware/Profiles]

M --> N[Analyze Network Settings]

N --> O[Consult Security Experts]

end

Each node above warrants explanation:

· Unauthorized Login: Look for password reset emails or login notifications from devices you don’t recognize.

· Root Access: Check if your phone or PC has any unknown administrator apps or profiles (see below).

· MITM & Redirect: Check if secure sites still have valid certificates. A sudden certificate error on many sites is a red flag.

· Influence Operations: Notice if disparate online “friends” or commenters echo the same unusual narratives; use ad transparency tools to see if you’re targeted.

(Source: synthesis of security publications and intelligence reports)

Forensic Protocol & Evidence Preservation

If you suspect you’re being targeted, follow a disciplined checklist with self-care in mind. Always back up data before making changes.

Record Everything: Take screenshots of suspicious posts/messages and note timestamps. (This is your “audit log.”)
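One way to make such an audit log harder to dispute later is to hash each saved file and chain the log entries together. The following is a minimal sketch, not a forensic standard: the JSON-lines layout, the field names, and the function names `append_entry` and `verify_chain` are all assumptions made for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def sha256_file(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def append_entry(log_path: Path, evidence: Path, note: str) -> dict:
    """Append one record to a JSON-lines log, chained to the previous record."""
    prev_hash = "0" * 64  # placeholder value used for the very first entry
    if log_path.exists():
        lines = log_path.read_text(encoding="utf-8").splitlines()
        if lines:
            prev_hash = hashlib.sha256(lines[-1].encode("utf-8")).hexdigest()
    entry = {
        "recorded_utc": datetime.now(timezone.utc).isoformat(),
        "file": evidence.name,
        "sha256": sha256_file(evidence),
        "note": note,
        "prev_entry_sha256": prev_hash,
    }
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry, sort_keys=True) + "\n")
    return entry


def verify_chain(log_path: Path) -> bool:
    """Check that every record references the hash of the line before it."""
    expected = "0" * 64
    for line in log_path.read_text(encoding="utf-8").splitlines():
        if json.loads(line)["prev_entry_sha256"] != expected:
            return False
        expected = hashlib.sha256(line.encode("utf-8")).hexdigest()
    return True
```

The chain only shows that earlier entries were not edited after later ones were written. It does not prove when a screenshot was taken, so keeping a copy of the log with a trusted third party is still worthwhile.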

Two-Device Test: Do the same searches/visits on a separate, known-clean device (e.g. a friend’s phone on cellular data). Are the results identical? If yes, it suggests account-level or platform targeting. If no, it suggests device- or network-specific manipulation.
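The comparison itself can be made less impressionistic by writing down what each device showed (for example, result URLs or headlines) and diffing the two lists. This is a rough sketch; the function name `compare_observations` and the idea of using a Jaccard overlap score are illustrative assumptions, not an established forensic measure.

```python
def compare_observations(suspect: list[str], clean: list[str]) -> dict:
    """Compare items (e.g. result URLs or headlines) seen on two devices."""
    a, b = set(suspect), set(clean)
    union = a | b
    return {
        "only_on_suspect": sorted(a - b),  # seen only on the device under suspicion
        "only_on_clean": sorted(b - a),    # seen only on the known-clean device
        # Jaccard overlap: 1.0 means identical sets, 0.0 means nothing in common
        "jaccard": len(a & b) / len(union) if union else 1.0,
    }


if __name__ == "__main__":
    report = compare_observations(
        ["news.example/a", "news.example/b", "news.example/c"],
        ["news.example/b", "news.example/c", "news.example/d"],
    )
    print(report)
```

Ordinary personalization means two devices rarely match perfectly, so a single low score says little. Repeating the test several times, logged out where possible, gives a more useful baseline.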

Network Isolation: Temporarily disable Wi-Fi and use cellular (or vice versa). If anomalies vanish on a different network, your router or ISP may be the culprit.
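Taken together, the two-device test and the network-isolation test narrow down which layer to examine first. The sketch below simply encodes that reasoning; the function name `triage` and the wording of the returned labels are assumptions, and the output is a hypothesis to test rather than a diagnosis.

```python
def triage(identical_on_clean_device: bool, vanishes_on_other_network: bool) -> str:
    """Suggest which layer to examine first, given the two test outcomes."""
    if identical_on_clean_device and vanishes_on_other_network:
        # The two observations point in different directions.
        return "inconsistent observations: repeat both tests"
    if identical_on_clean_device:
        # The anomaly follows the account, not the hardware or the network.
        return "account-level or platform targeting"
    if vanishes_on_other_network:
        # The anomaly follows the network path.
        return "router or ISP"
    # The anomaly stays with this device on every network.
    return "device-level compromise"


if __name__ == "__main__":
    print(triage(identical_on_clean_device=False, vanishes_on_other_network=True))
```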

Verify HTTPS: Access secure websites (e.g. bank, email) and click the lock or site-information icon in the address bar. Inspect the certificate issuer. If certificates look abnormal or “self-signed,” or if major sites produce browser warnings across multiple devices, treat it as a warning sign that your traffic may be intercepted.
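The same inspection can be scripted so that the issuer and fingerprint are recorded rather than eyeballed. This is a minimal sketch using Python's standard `ssl` module; the helper names `flatten_name` and `fetch_certificate` are made up for illustration. One caveat: Python may use a different trust store than the browser, so a verification failure here is a prompt to investigate, not proof of interception.

```python
import hashlib
import socket
import ssl


def flatten_name(name) -> dict:
    """Flatten the nested tuples that ssl uses for subject/issuer names."""
    return {key: value for rdn in name for key, value in rdn}


def fetch_certificate(host: str, port: int = 443, timeout: float = 10.0) -> dict:
    """Connect with normal verification and summarise the server certificate."""
    context = ssl.create_default_context()  # verifies chain and hostname
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            cert = tls.getpeercert()                 # parsed fields
            der = tls.getpeercert(binary_form=True)  # raw DER bytes
    return {
        "host": host,
        "issuer": flatten_name(cert["issuer"]),
        "not_after": cert["notAfter"],
        "sha256_fingerprint": hashlib.sha256(der).hexdigest(),
    }
```

Running `fetch_certificate` for the same host on home Wi-Fi and on cellular data, then comparing issuers, is one way to apply the check. Large sites legitimately serve different certificates from different servers, so a differing fingerprint alone is not alarming; an unfamiliar issuer is the more meaningful signal.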

Check for Remote Management / Profiles:

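· iOS/macOS: On iOS, go to Settings → General → VPN & Device Management; on macOS, look for Profiles or Device Management in System Settings. Remove any unknown profiles.

· Android: Look under Settings → Security → Device Admin Apps (the exact path varies by vendor). Disable any suspicious admin app.

· Browsers: Check browser extensions and root certificates (e.g. Firefox’s certificate manager under Settings → Privacy & Security → View Certificates) for anything unrecognized.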

Inspect Active Sessions: On major accounts (email, social, corporate VPN), use the “security” settings to view all logged-in devices/sessions. Log out any that are unfamiliar.

Use Trusted Tools: Run reputable anti-malware scanners on all devices. On PCs, run an offline scan from rescue media so that malware on the installed system cannot hide from it. On phones, make sure built-in protections such as Google Play Protect are enabled, or consider dedicated detectors for stalkerware (CISA recommends checking known stalkerware hashes if feasible).
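Checking files against known hashes can be sketched in a few lines. The example below assumes you already have a set of SHA-256 indicators from a source you trust; the `known_bad` set and the function name `match_indicators` are hypothetical, and no real indicator values are included. It applies to files you can actually read, such as a PC folder or an extracted backup.

```python
import hashlib
from pathlib import Path


def sha256_file(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def match_indicators(root: Path, known_bad: set[str]) -> list[Path]:
    """Return files under root whose SHA-256 appears in known_bad.

    known_bad is assumed to hold lowercase hex digests.
    """
    hits = []
    for path in sorted(root.rglob("*")):
        if path.is_file() and sha256_file(path) in known_bad:
            hits.append(path)
    return hits
```

A match is strong evidence, but an empty result proves little: hash lists only catch samples someone has already catalogued, and a single changed byte produces a different hash.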

Collect Logs: If you have network logs (e.g. router logs, browser developer console logs), preserve them. They can show DNS changes or injection attempts.
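As a rough illustration of spotting DNS changes in preserved logs, the sketch below lists domains that resolved to more than one address over time. The three-column line format (timestamp, domain, answer) is an assumption; real router logs differ by vendor and would need their own parsing. The addresses in the usage example come from ranges reserved for documentation.

```python
from collections import defaultdict


def dns_answer_changes(lines: list[str]) -> dict[str, list[str]]:
    """Map each domain to the distinct answers seen, in order, if more than one."""
    seen: dict[str, list[str]] = defaultdict(list)
    for line in lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip lines that do not fit the assumed format
        _timestamp, domain, answer = parts
        key = domain.lower()
        if answer not in seen[key]:
            seen[key].append(answer)
    return {domain: answers for domain, answers in seen.items() if len(answers) > 1}


if __name__ == "__main__":
    log = [
        "2026-02-01T08:00:00Z example.org 192.0.2.10",
        "2026-02-02T08:00:00Z example.org 203.0.113.7",
        "2026-02-02T08:01:00Z example.net 198.51.100.2",
    ]
    print(dns_answer_changes(log))
```

Content delivery networks rotate addresses routinely, so a change is a reason to look up who owns the new address, not evidence of hijacking by itself.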

Engage Experts: If red flags persist, contact security resources:

· Access Now Digital Security Helpline: Offers live advice for targeted individuals (accessnow.org).

· Electronic Frontier Foundation (Surveillance Self-Defense): Guides on digital evidence collection (ssd.eff.org).

· CERT or local law enforcement: If you gather concrete proof (malware, unauthorized access), law enforcement cybercrime units or a national CERT can investigate further.

Follow this methodical approach before jumping to conclusions. Many symptoms of “trumaning” have innocuous explanations: personalized algorithms, social engineering coincidences, or even stress-induced misperceptions. The goal is to gather objective clues and not let fear drive you to wild assumptions.

Mitigation and Resilience Measures

Regardless of whether an attack is underway, these actions dramatically raise the bar for any adversary:

Phishing-Resistant Authentication: Use hardware security keys or mobile passkeys (WebAuthn/FIDO2). These cryptographic credentials thwart credential theft (pages.nist.gov). CISA endorses FIDO2 for high-risk users.

Lockdown Mode & Updates: On iOS/macOS, enable Apple’s Lockdown Mode, which disables many potentially vulnerable features and can block some zero-click spyware exploits. Always install OS and app updates promptly; many attacks exploit known flaws. Apple notes that Lockdown Mode and prompt patching have blocked real-world attacks (support.apple.com, citizenlab.ca).

Device Hygiene: Use full-disk encryption and strong PINs, and avoid jailbreaking or rooting your devices (doing so makes them easier to compromise). Remove unused apps, especially messaging apps and apps from unknown sources.

Secure Router and Wi-Fi: Follow CISA best practices for routers: change default passwords, update firmware, use strong WPA2/3 encryption, disable WPS, and consider using a privacy-respecting DNS (like Cloudflare 1.1.1.1).

Environment Safety Nets: If disturbing content or harassment is affecting you, reduce exposure: log out or take breaks from apps if needed. Use ad-blockers to limit microtargeted ads (though note: some attackers may target via email or other channels too).

Legal and Advocacy Steps: Documented evidence of intrusion or harassment can be shared with oversight bodies or journalists to shine public light on misuse of authority. To stay current on best practices, follow reputable security and privacy organizations (e.g. The Tor Project, the Mozilla security blog). They often publish guides for journalists and activists.

Digital Privacy Tools: Regularly check if your accounts appear in data breach lists (e.g. haveibeenpwned.com). Use end-to-end encrypted messaging (Signal with verified safety numbers, WhatsApp with verified security codes, etc.) to reduce the risk of interception. For added network anonymity, consider the Tor Browser.
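A related check can be scripted against the same site's Pwned Passwords service. Note that this is a different lookup from the account search mentioned above: it asks whether a password (not an email address) appears in known breaches. It uses a k-anonymity scheme in which only the first five characters of the password's SHA-1 hash leave your machine. The sketch below is a minimal illustration; the function names and the User-Agent string are assumptions.

```python
import hashlib
import urllib.request


def split_sha1(password: str) -> tuple[str, str]:
    """Return the 5-character prefix and 35-character suffix of the SHA-1 hash."""
    digest = hashlib.sha1(password.encode("utf-8")).hexdigest().upper()
    return digest[:5], digest[5:]


def count_in_range(range_body: str, suffix: str) -> int:
    """Find the suffix in a 'SUFFIX:COUNT' response body; 0 means not listed."""
    for line in range_body.splitlines():
        candidate, _, count = line.partition(":")
        if candidate.strip().upper() == suffix:
            return int(count.strip())
    return 0


def pwned_count(password: str) -> int:
    """Query the range endpoint; only the 5-character prefix is sent."""
    prefix, suffix = split_sha1(password)
    url = f"https://api.pwnedpasswords.com/range/{prefix}"
    request = urllib.request.Request(url, headers={"User-Agent": "breach-check-sketch"})
    with urllib.request.urlopen(request, timeout=10) as response:
        body = response.read().decode("utf-8")
    return count_in_range(body, suffix)
```

A non-zero count means the password has appeared in at least one breach corpus and should be retired everywhere it is used. It says nothing about whether a particular account of yours was breached.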

Governance & Transparency Imperatives

Finally, we connect to the CoherenceLattice/Sophia ethos of evidence, transparency, and empathetic accountability. Here are some broader lessons:

Public Data in Machine-Readable Form: As we saw in the earlier article, truly public records should be API-friendly. If social media, corporations, and governments must be accountable, their data pipelines must be open: bulk campaign finance data, lobbying disclosures, corporate registries, and even ad archives should be accessible in structured formats for independent auditing. (This mirrors our Ψ metric: higher transparency T multiplies the value of public empathy E.)

Provenance in Journalism: Investigative organizations like ICIJ and OCCRP (whose Aleph platform supports document-based research) emphasize sourcing every claim. We should apply the same to “exposing a curated feed.” That means demanding logs or network captures for anomalies before concluding they are attacks. Having a chain-of-trust and audit trail for each piece of evidence (who observed it, how it was collected) embodies the coherence principle of evidence-led narratives.

AI as a Tool, Not a Panacea: Advanced AI agents could, in principle, automate many of the above detection checks (log analysis, correlation across sources, timeline construction). But any such tool must itself be transparent: its code and data sources should be open, and it should output confidence levels, not just “The target is being trumaned.” This is consistent with the Sophia repo’s emphasis on deterministic operation and audit logs.

Ethics & Well-being: Beware False Paranoia

Paranoia thrives in the dark. While technical vigilance is wise, remember there are safer explanations for many phenomena:

· Algorithmic Filter Bubbles: Social platforms do heavily personalize content. Studies (e.g. Bakshy et al. 2015) found that algorithmic ranking modestly reduces exposure to opposing viewpoints, and that users’ own choices of friends and links reduce it further. It’s unsettling, but it’s usually a product of data-driven recommendation, not necessarily malice.

· Everyday Harassment and Coincidence: Unfortunately, the internet can feel personal because it is: malware and scams are rampant, and coincidences are part of chance. Not every targeted insult implies a grand scheme; sometimes it’s individual trolls. (Facebook’s own research into “coordinated inauthentic behavior” shows many threads lead back to ad-driven agendas, not just one secret group.)

However, your fear and anxiety are real. The stress of “Am I watched?” can itself be debilitating. If these suspicions seriously distress you, consider talking to a counselor or therapist. Technical explanations should be balanced with empathy and support.

At the same time, armed with knowledge, you can reclaim agency: track and verify unusual events, but also seek confirmation from trusted peers. Keep question-and-evidence logs, and avoid drawing conclusions without data. This preserves the transparency and empathy values we champion: be transparent with yourself about uncertainties, and be kind to yourself in the process.

Works Cited

United States Senate, Church Committee. Covert Action: The FBI’s Covert Action Staff Handbook (1956–1971). senate.gov. Accessed 2026.

Berghel, Hal. “Dark Traffic on the Dark Web: Trump, Troll Farms, and Twitter.” Computer, Sept. 2017. DOI:10.1109/MC.2017.4121108. (Explains “dark posts” on social media.)

Bakshy, E., Messing, S., & Adamic, L. “Exposure to Ideologically Diverse News and Opinion on Facebook.” Science 348, no. 6239 (2015): 1130–1132.

Nissenbaum, Asaf; Yahav, Itai. “Covert MITM Attacks on Mobile Payments.” 2023. (Discusses root CA attacks.)

Meta Platforms, Inc. “Inside Feed: Coordinated Inauthentic Behavior.” (Dec 2018) about.fb.com.

Apple, Inc. “Protecting iOS Users.” Apple Platform Security (2025). support.apple.com.

Microsoft, Google, Mozilla. “Unified Rejection of Kazakhstan Government’s TLS Certificate.” (Aug 2019) security.googleblog.com, blog.mozilla.org.

Senate Select Committee on Intelligence. “Russian Active Measures and Disinformation Campaigns.” (Oct 2019) intelligence.senate.gov.

The Citizen Lab, University of Toronto. “FORCEDENTRY: NSO Group iMessage Zero-Click Exploit.” (Sept 2021) citizenlab.ca.

Meta Platforms, Inc. “Meta Ad Library.” (2024) facebook.com (Accessed 2026).

CISA. “Home Network Security.” (2024) cisa.gov.

Apple, Inc. “Review and Delete Configuration Profiles on iPhone/iPad.” (2024) support.apple.com.

NIST. “SP 800-63B: Digital Identity Guidelines, Authentication and Lifecycle.” (2022) pages.nist.gov.

Access Now. “Digital Security Helpline.” (2026) accessnow.org.

Electronic Frontier Foundation. “Surveillance Self-Defense (SSD) Guides.” (2026) ssd.eff.org.

All web sources were accessed in Feb–Mar 2026 and are considered authoritative primary or official publications.